What Americans think about artificial intelligence

Results from the "Pulse" survey

Research authors: Jamie Elsey, David Moss

We have recently released findings from the second wave of our “Pulse” project, which tracks American attitudes towards effective giving and impactful causes. This latest wave, conducted between February and April 2025 (about six months after the first wave, which ran from July to September 2024), polled approximately 5,600 US adults. In this summary, we report only the results on attitudes towards artificial intelligence (AI). Upcoming articles will cover broader perceptions of impactful causes and donation behaviors. See also our recent summary of US attitudes towards Effective Altruism.

Why this research matters

As AI systems, including large language models such as ChatGPT, become increasingly integrated into daily life, many decision-makers need data on how Americans perceive both the risks and benefits of these technologies:

For AI safety organizations and researchers, these insights can help identify audiences receptive to safety-focused policy proposals and inform strategic communications.

For policymakers and regulators in the US, knowing which concerns about AI risks Americans share across party lines highlights opportunities for bipartisan policy solutions.

For the technology sector, these results provide a temperature check on public trust and highlight areas where transparency and safety measures might address public concerns.

Key findings

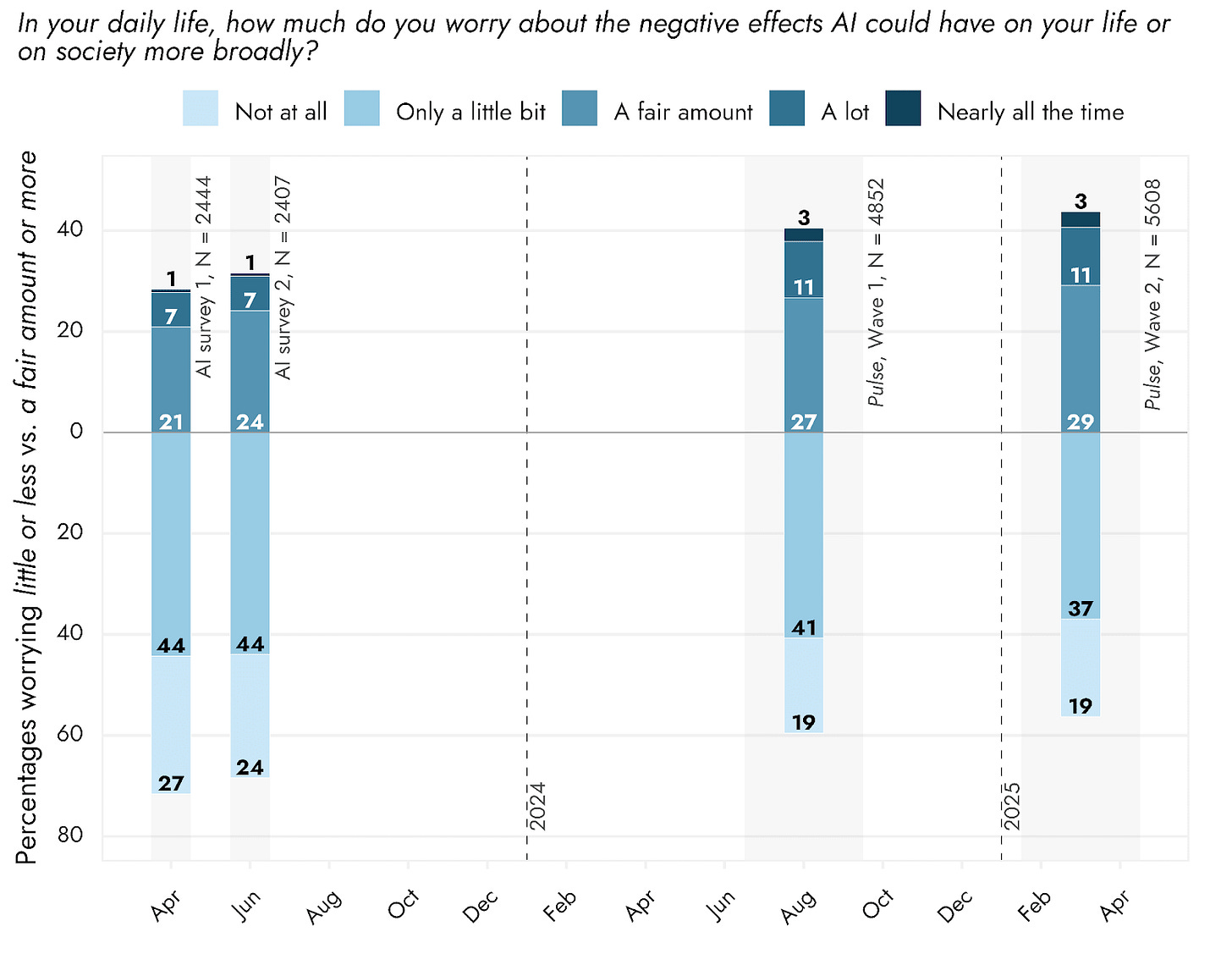

Worry about AI may be plateauing.

Respondents’ worry about AI has been climbing since 2023. When we compare responses from 2025 with our 2023 baseline, 57 to 59% of US adults would be expected to report higher levels of concern now than they would have two years ago. Consistent with this, 71% of respondents reported little or no worry about AI in 2023, compared with 56% in the latest wave.
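A comparison like this can be read as a probability of superiority: the chance that a randomly chosen respondent from the later wave reports more worry than a randomly chosen respondent from the earlier one. The sketch below illustrates that calculation on two lists of ordinal ratings. It rests on illustrative assumptions: the numeric coding of worry levels, the example responses, and counting ties as half a "win" are not taken from the survey, and the published 57 to 59% figure comes from the full analysis, not from this code.

```python
from itertools import product


def probability_of_superiority(later, earlier):
    """Share of (later, earlier) respondent pairs where the later rating is higher.

    Ties count as half a 'win'. This is an assumed convention; other
    analyses may drop ties or report them separately.
    """
    wins = 0.0
    for a, b in product(later, earlier):
        if a > b:
            wins += 1.0
        elif a == b:
            wins += 0.5
    return wins / (len(later) * len(earlier))


# Hypothetical worry ratings on an assumed ordinal coding
# (1 = least worried, 4 = most worried). These are NOT survey data.
worry_2023 = [1, 2, 2, 2, 3, 3, 4]
worry_2025 = [1, 2, 2, 3, 3, 4, 4]

print(round(probability_of_superiority(worry_2025, worry_2023), 3))
```

Under this convention, a value of 0.5 would indicate no shift between waves, and values above 0.5 indicate that the later wave tends to report more worry.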

However, the rise in concern appears to be slowing. Between 2024 and 2025, worry increased only marginally: slightly fewer respondents said they worry “only a little bit” (down from 41% to 37%), while slightly more reported worrying “a fair amount” (up from 27% to 29%). This potential plateau may reflect the normalization of AI tools in everyday life.

In general, women tend to worry more about AI than men, as do respondents with lower (versus higher) household incomes, Democrats (versus Republicans), and non-white (versus white) respondents.

Figure 1: Worry about AI among US adults

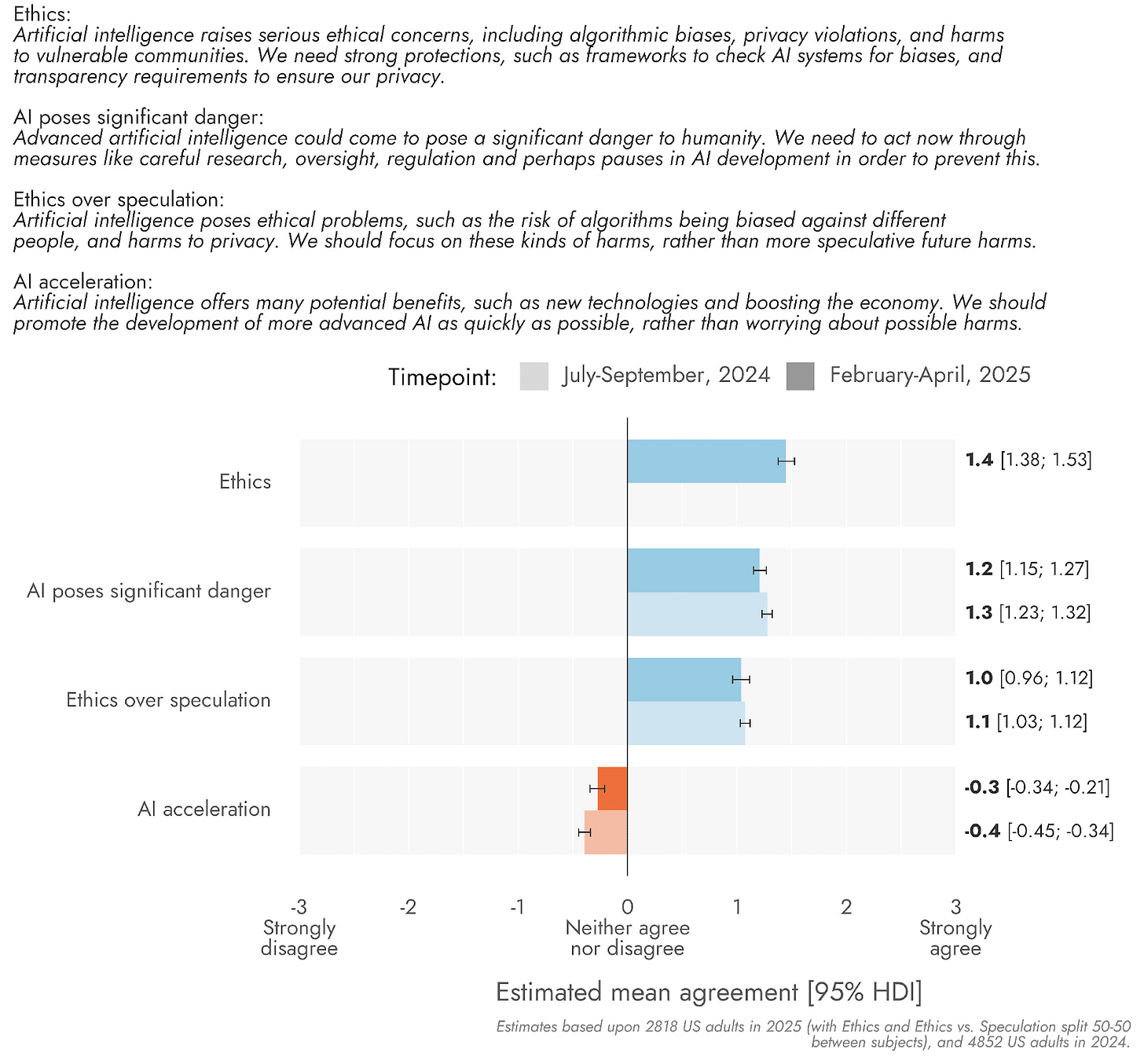

Americans favor caution over acceleration, and this is true across the political spectrum.

When presented with different stances on AI development, respondents agreed more with statements expressing concern about AI risks than with statements favoring accelerated AI development. Notably, respondents:

Agreed, on average, that “AI poses ethical concerns” about algorithmic biases, privacy violations, and similar issues (scoring 1.4 on a scale from -3 to +3), that “AI poses significant danger to humanity” (scoring 1.2), and that “AI poses ethical risks that are concerning, and we should focus on these risks rather than more speculative ones” (scoring 1.0).

Disagreed, on average, with the idea of accelerating AI development to maximize benefits while minimizing focus on risks (scoring -0.3).

Overall, we estimate that over two-thirds of US adults would favor risk-focused perspectives over acceleration.
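Figure 3's caption refers to a within-subjects probability of superiority. Unlike a comparison between survey waves, this pairs each respondent's answers to two statements, so a claim like the one above can be read as the share of respondents who agree more with a risk-focused statement than with the acceleration statement. The minimal sketch below computes that share on the -3 to +3 agreement scale. The function name, the six example respondents, and the ties-count-as-half convention are hypothetical, and the full report may compute its estimates differently.

```python
def within_subjects_superiority(ratings_a, ratings_b):
    """Share of respondents who rate statement A above statement B.

    ratings_a[i] and ratings_b[i] are the same respondent's answers on a
    -3 to +3 agreement scale. Ties count as half (an assumed convention).
    """
    if len(ratings_a) != len(ratings_b):
        raise ValueError("each respondent needs a rating for both statements")
    score = 0.0
    for a, b in zip(ratings_a, ratings_b):
        if a > b:
            score += 1.0
        elif a == b:
            score += 0.5
    return score / len(ratings_a)


# Hypothetical paired responses from six respondents (NOT survey data).
danger_to_humanity = [3, 1, 2, 0, -1, 2]
accelerate_ai = [-2, 1, 0, 1, -3, -1]

print(within_subjects_superiority(danger_to_humanity, accelerate_ai))
```

Because each pair comes from the same person, this measure reflects individual preferences between statements rather than differences between groups of respondents.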

On average, respondents in every partisan group (Democrats, Republicans, and Independents) leaned against AI acceleration and toward agreement with AI risk concerns of all types, both ethical and more speculative. Nonetheless, Republicans were less opposed to acceleration and less concerned about AI risks than Democrats or Independents.

Figure 2: US adults’ agreement with different AI-related perspectives

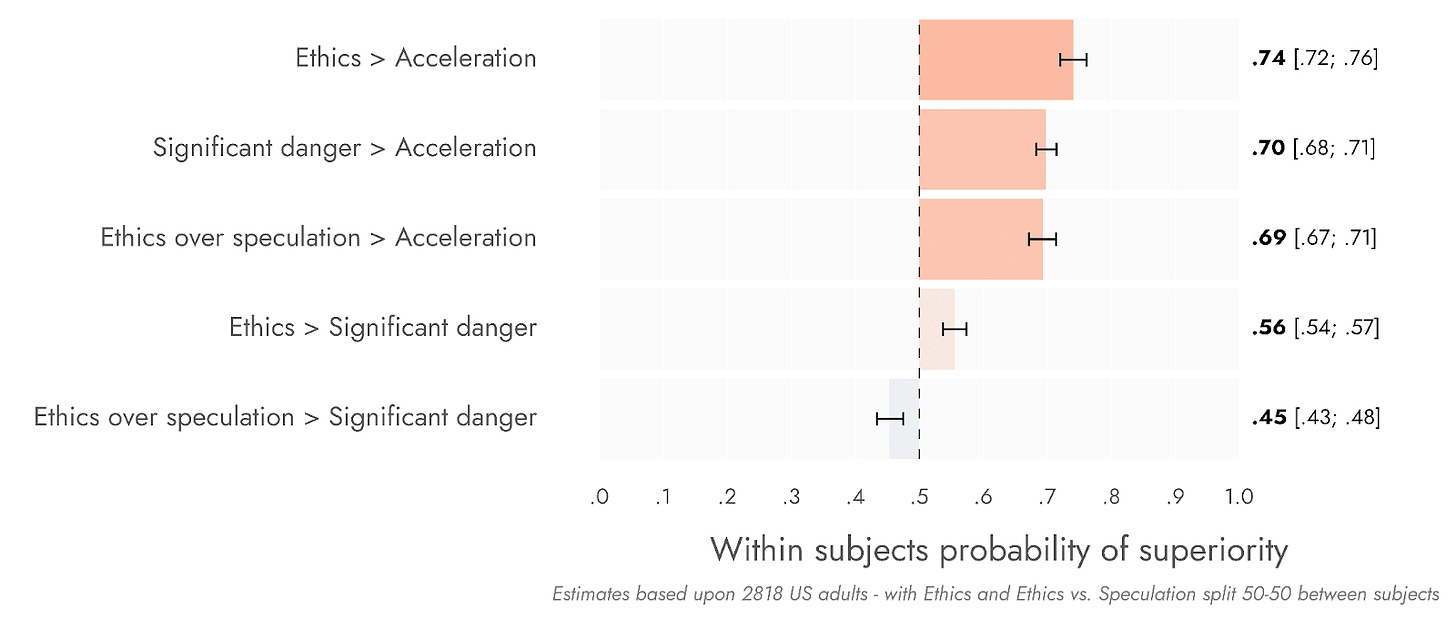

Concerns about “AI ethics” (near-term harms like bias) and “AI safety” (more speculative harms to humanity) are correlated.

Although respondents on average agreed with a statement explicitly framing ethics as more important than “speculative” risks (see Figure 2), those who agreed with it were still likely to agree that AI poses significant dangers to humanity.

When we removed the comparative framing and simply asked about AI ethics, agreement increased, and the correlation with concern about more speculative harms was even stronger.

These results suggest that respondents tend to be concerned about multiple AI risks at once, in contrast to the potentially more siloed attitudes of advocates in the AI safety and AI ethics communities.

Figure 3: Within-subjects probability of superiority of agreement with selected AI-related perspectives over others

Read the full report

The complete analysis, including detailed demographic breakdowns and methodological notes, is available on the EA Forum. Stay tuned for additional Wave 2 findings on other cause areas, and look for Wave 3 results later in 2025.

Acknowledgements

Thank you to Elisa Autric for writing the research summary for this Substack, Jamie Elsey for review, and Thais Jacomassi for copyediting.

Thank you!

Thank you for taking the time to read our Substack. If you would like to support our efforts, please subscribe below or share our posts with friends and colleagues.

We’re also always looking for feedback on our work. You can share your thoughts about this publication anonymously or simply reply to this email/post with suggestions for improvement or any questions.

By default, we’re sharing this Substack via email with Rethink Priorities newsletter members. Please feel free to unsubscribe from this Substack if you’d prefer to stick with our monthly, general newsletter.

I'm confused as to why the survey gives concrete examples of issues in AI ethics but leaves speculative risks abstract (“advanced AI could come and pose significant danger to humanity”). For the latter, it seems simple enough to say “help rogue actors design and deploy weapons of mass destruction, or strip humans of control over the world's resources in AI systems' pursuit of their own goals.”